Artificial intelligence (AI) models are reshaping society, the economy, and the human experience. Because of these models’ transformative effects, they are seen not as just another incremental innovation, but as a strategic, power-shifting technology with geopolitical implications.

For this reason, the development of AI models is the central technological priority of many global powers. The United States, China, and other leading nations have devoted considerable resources to scaling their sovereign AI stacks. However, competition between these powers to achieve so-called “superintelligence” is not always fair; recent findings from leading U.S. AI labs demonstrate how an otherwise industry-standard technical process known as “distillation” has been weaponized by their competitors to extract sensitive data with the goal of altering the balance of AI power.

While these distillation attacks represent the pinnacle of state-backed corporate espionage, the specific attack typologies reflect practices long employed by fraudsters across industries, including—most notably—location obfuscation techniques designed to trick access systems.

Protecting this strategically important intellectual property and preventing the unauthorized exploitation of AI models requires a comprehensive anti-fraud framework, and robust fraud prevention starts with effective location verification.

What is AI Distillation?

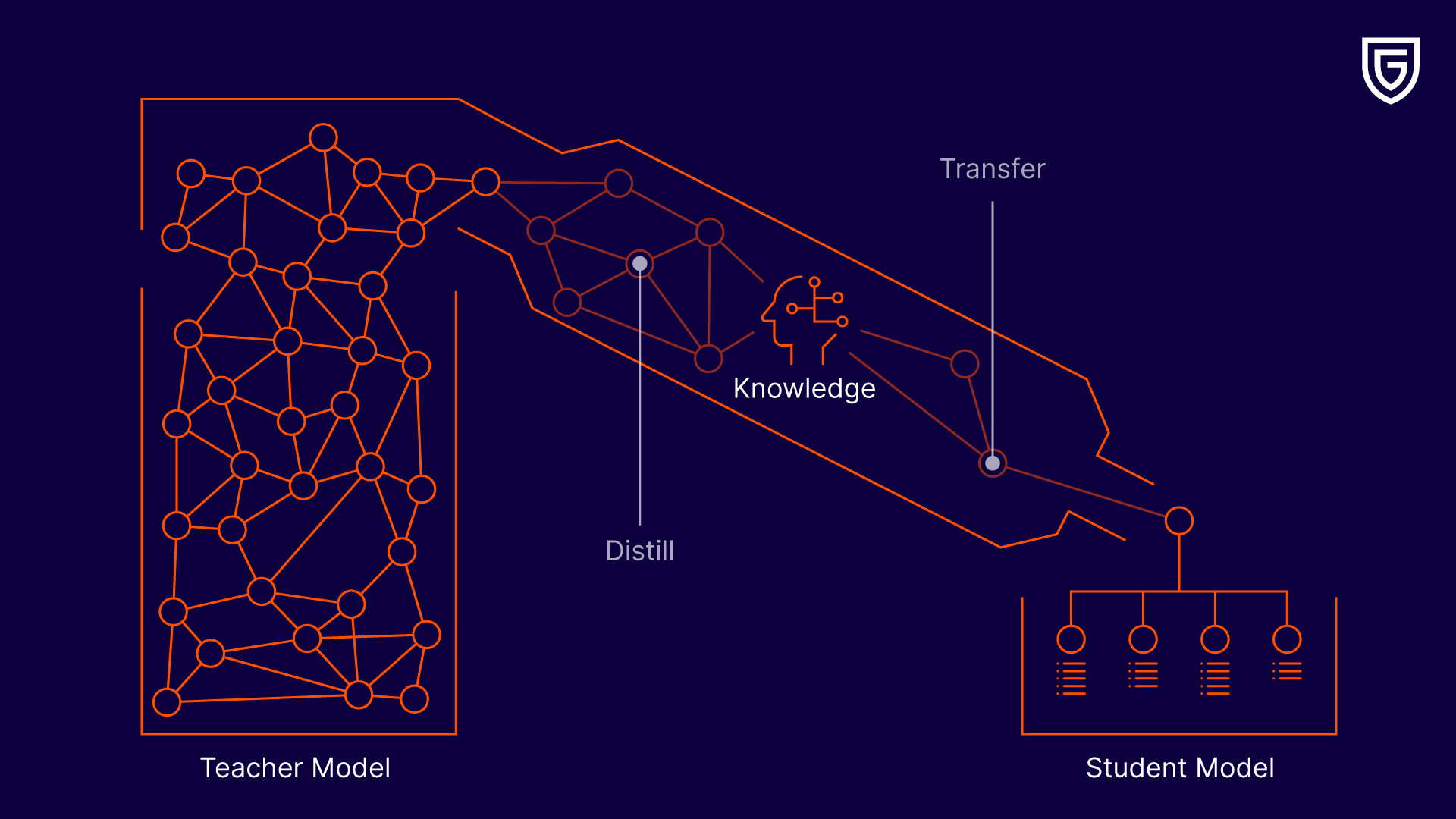

AI distillation is the process of training a smaller model on the outputs of a more sophisticated model. When used by AI companies to improve their own products, it allows for the creation of efficient models that don’t require the same compute resources.

However, distillation techniques can also be used by bad actors to automate massive data extraction from frontier models to steal proprietary intelligence, compromising intellectual property and using it to build cheaper competitors.

Behind the attack vectors targeting Anthropic, OpenAI, and Google

In February 2026, leading AI labs Anthropic, OpenAI, and Google released information on vast fraudulent account networks used by Chinese AI labs, including DeepSeek, Moonshot AI, and others. In each of these cases, the U.S. labs alleged that fraudulent accounts, distributed through commercial proxy services, were able to engage in millions of unauthorized exchanges with the intent to illicitly distill LLM characteristics for their own AI development.

Specifically, Anthropic reports that “labs use commercial proxy services which resell access to Claude and other frontier AI models at scale. These services run what we call ‘hydra cluster’ architectures: sprawling networks of fraudulent accounts that distribute traffic across our API as well as third-party cloud platforms.”

Google’s findings support Anthropic’s claims, stating that the attackers employed “infrastructure and evasion tactics for their operations, including proxying phishing domains through Cloudflare to obscure the attacker IP addresses.” OpenAI simply states that the attackers used “new, obfuscated methods.”

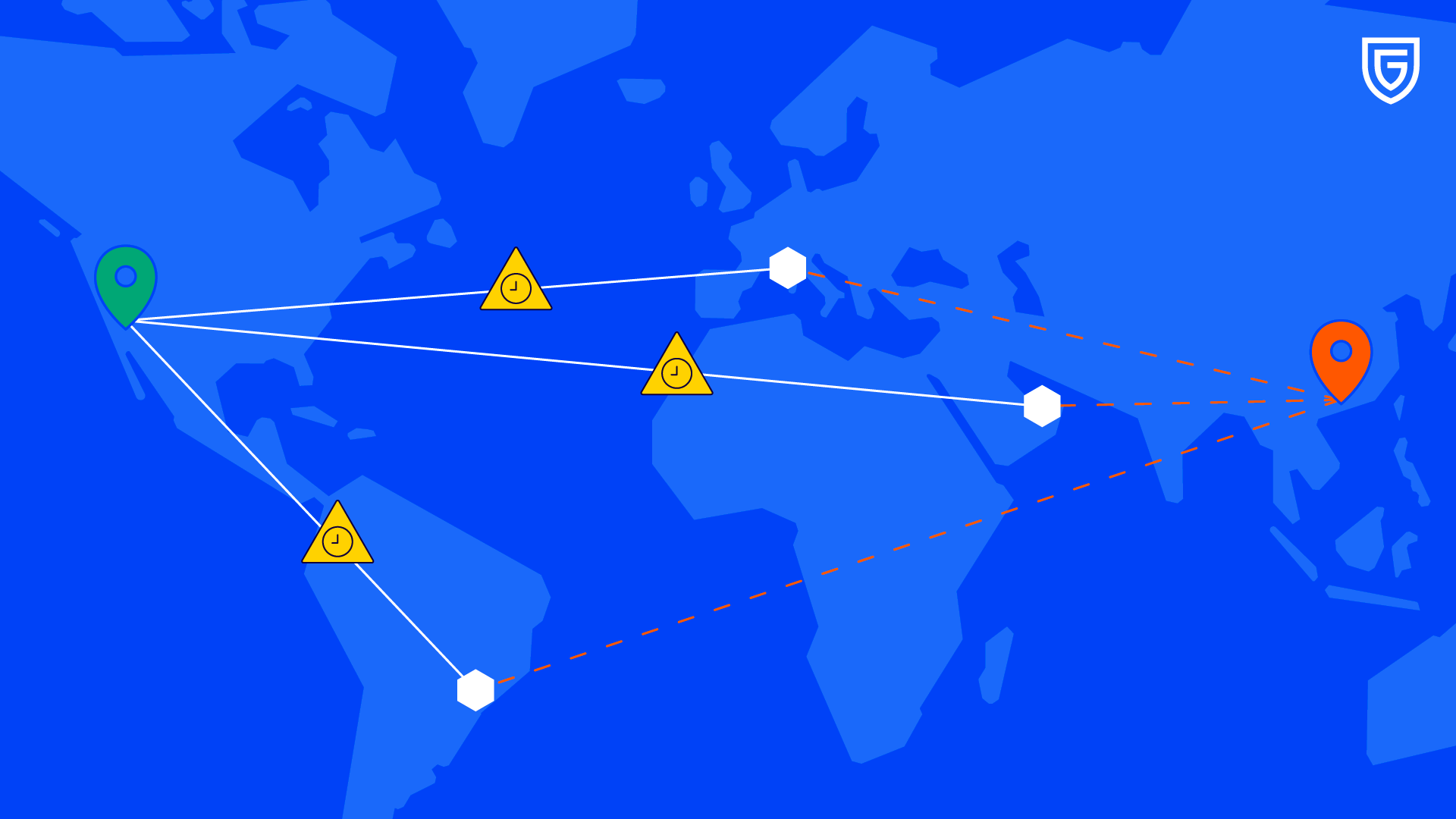

In summary, without obfuscation methods, attackers cannot engage in illicit model distillation from a physical location within China. For both risk mitigation and national security reasons, AI labs will treat traffic originating from China as, at a minimum, high-risk, if not completely off-limits.

Therefore, attackers will obfuscate their IP addresses so that their API requests appear to originate from outside China. However, a high volume of traffic emanating from a single IP address is itself a sign of suspicious behavior. For illicit distillation to work effectively, attackers need a large, geographically dispersed pool of non-Chinese IP addresses.

Ultimately, requests generated by extraction code running on hardware physically located in China are routed via fraudulent accounts through a vast network of overseas servers, which then make the illicit API calls used to distill model characteristics.

IP verification & location precision enrichment: A technical defense playbook to block illicit API access

Clearly, the sophistication of these attacks, affecting numerous leading AI labs, means current defenses are not sufficiently robust. In the aftermath of these attacks, Peter Wildeford, Head of Policy at the AI Policy Network and a leading AI forecaster, laid out a comprehensive proposal with a critical recommendation: better enforcement of geographic access restrictions, informed by “analyzing patterns like VPN usage … and account creation behaviors that correlate with proxy network operations.”

Such a recommendation may seem simple, but ensuring that it is implemented properly requires a level of geolocation sophistication exceeding that of the labs’ advanced adversaries.

Ideally, to mitigate the risk from obfuscated high-risk accounts, platforms should be able to identify suspicious proxy activity in real time and at scale. With effective location verification tools, this is achievable.

First, any platform accessible through an API should be collecting and analyzing IP address information from account holders. At a minimum, this information should be cross-referenced against VPN databases, as traffic routed through a VPN may be high-risk, especially when it is associated with suspicious devices. VPN databases can contain information on hundreds of millions of VPN endpoints, Tor exit nodes, and proxies. Such data, in the hands of an AI lab, can serve as an essential layer for controlling access to its APIs.
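As a rough illustration of that first layer, the sketch below checks an incoming IP address against a small list of anonymizer ranges using Python's standard `ipaddress` module. The `ANONYMIZER_RANGES` table, the labels, and the `build_index` and `classify_ip` helpers are hypothetical names chosen for this example, and the CIDR blocks are reserved documentation ranges standing in for a real, commercially maintained VPN database.

```python
import ipaddress

# Hypothetical blocklist. These CIDR blocks are reserved documentation ranges,
# used only as placeholders for a real VPN/proxy/Tor database.
ANONYMIZER_RANGES = {
    "vpn": ["192.0.2.0/24"],
    "tor_exit": ["198.51.100.0/24"],
    "proxy": ["203.0.113.0/24", "2001:db8::/32"],
}


def build_index(ranges):
    """Parse the CIDR strings once so lookups do not re-parse them."""
    return [
        (ipaddress.ip_network(cidr), label)
        for label, cidrs in ranges.items()
        for cidr in cidrs
    ]


def classify_ip(ip_string, index):
    """Return the anonymizer label for an IP, or None if no range matches."""
    address = ipaddress.ip_address(ip_string)
    for network, label in index:
        # An IPv4 address is never "in" an IPv6 network (and vice versa),
        # so mixed-version lists are safe to scan.
        if address in network:
            return label
    return None


index = build_index(ANONYMIZER_RANGES)
print(classify_ip("192.0.2.44", index))  # matches the placeholder VPN range
print(classify_ip("8.8.8.8", index))     # not in any listed range
```

A linear scan is only reasonable for a toy list. A database with hundreds of millions of entries would likely need a prefix tree or a dedicated lookup service, and a match would more plausibly feed a risk score than trigger an outright block.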

However, bad actors are resourceful. While existing tools are robust at detecting standard VPN traffic, sophisticated cyber actors will seek out non-suspicious and uncompromised IPs—such as residential IP addresses associated with normal homes and businesses—rather than data centers.

Therefore, AI labs need to employ cutting-edge location verification and spoofing detection tools to stay guarded against the most deceptive accounts. AI labs have access to a critical data point that can help them sift through the noise and find nefarious actors in a sea of ostensibly uncompromised IP addresses: latency.

Generally speaking, the time it takes for a signal to travel from one point to another over physical internet infrastructure is fairly predictable. Because signals in optical fiber travel at a known, bounded speed (roughly two-thirds the speed of light in a vacuum), the observed time it takes for a signal to travel between two points can be converted into a maximum possible distance. Traffic that genuinely originates at its purported IP address and communicates with a server in another location will travel the expected distance between the two locations in a predictable time.
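The conversion from time to distance can be sketched in a few lines. This example assumes signals propagate through fiber at about two-thirds the speed of light in a vacuum; that factor and the `max_distance_km` helper are illustrative assumptions, not values taken from any lab's tooling.

```python
SPEED_OF_LIGHT_KM_PER_MS = 299.792458  # speed of light in a vacuum, km per ms
FIBER_FACTOR = 2 / 3  # assumption: typical propagation speed in optical fiber


def max_distance_km(rtt_ms, fiber_factor=FIBER_FACTOR):
    """Upper bound on the one-way distance implied by a round-trip time."""
    if rtt_ms < 0:
        raise ValueError("round-trip time cannot be negative")
    one_way_ms = rtt_ms / 2  # a round trip covers the distance twice
    return one_way_ms * SPEED_OF_LIGHT_KM_PER_MS * fiber_factor


# A 10 ms round trip bounds the other endpoint to within roughly 1,000 km.
print(round(max_distance_km(10)))
```

The result is an upper bound only: real routes are rarely straight lines and routers add processing delay, so the true distance is usually shorter than the bound suggests.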

However, traffic illicitly routed through a particular IP address will take a longer-than-expected time to travel between the two points, as it doesn’t just have to travel between the server and the location of the apparent IP address, but also from the apparent IP address to the true origin of the API request. By comparing expected travel times with observed travel times, platforms can detect spoofing that normal VPN databases will miss.
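A minimal sketch of that comparison follows. It estimates the expected round-trip time from the great-circle distance between the server and the location claimed by the IP address, then flags connections whose observed round-trip time exceeds the estimate by more than a tolerance. The function names, the 200 km/ms fiber speed, the `route_overhead` multiplier, and the `slack_ms` tolerance are all assumptions picked for illustration; a production system would calibrate them against measured traffic.

```python
import math

EARTH_RADIUS_KM = 6371.0
FIBER_KM_PER_MS = 200.0  # assumption: about two-thirds the speed of light


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance between two latitude/longitude points, in km."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def expected_rtt_ms(distance_km, route_overhead=2.0):
    """Estimated round-trip time; route_overhead is a hypothetical multiplier
    covering indirect cable routes and router delay."""
    return 2 * distance_km / FIBER_KM_PER_MS * route_overhead


def looks_relayed(server, claimed, observed_rtt_ms, slack_ms=30.0):
    """True when the observed RTT is too long for the claimed location."""
    distance = great_circle_km(*server, *claimed)
    return observed_rtt_ms > expected_rtt_ms(distance) + slack_ms


server = (39.04, -77.49)    # example server near Ashburn, Virginia
claimed = (34.05, -118.24)  # IP geolocates to Los Angeles
print(looks_relayed(server, claimed, observed_rtt_ms=70))   # plausible
print(looks_relayed(server, claimed, observed_rtt_ms=260))  # extra hop likely
```

Congestion and slow last-mile links also inflate latency, so a single slow measurement is weak evidence. Repeated measurements combined with other signals would give a more reliable picture.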

Conclusion

Adversarial distillation has the potential to fundamentally shift the balance of AI power. Effectively detecting the signatures of a distillation attack is essential to stopping these threats. While no one solution is a silver bullet, latency-based spoofing detection is an essential technique for detecting, and subsequently halting, deceptive behavior.

Anthropic, “Detecting and preventing distillation attacks,” Anthropic, February 23, 2026, https://www.anthropic.com/news/detecting-and-preventing-distillation-attacks.

Google Threat Intelligence Group, “GTIG AI Threat Tracker: Distillation, Experimentation, and (Continued) Integration of AI for Adversarial Use,” Google Cloud Blog, February 12, 2026, https://cloud.google.com/blog/topics/threat-intelligence/distillation-experimentation-integration-ai-adversarial-use.

OpenAI, “OpenAI US House Select Cmte Update [021226],” February 12, 2026, hosted by Bloomberg, https://assets.bwbx.io/documents/users/iqjWHBFdfxIU/rRmql_jJcxb4/v0.

Peter Wildeford, “China Is Reverse-Engineering America’s Best AI Models,” Substack, March 16, 2026, https://peterwildeford.substack.com/p/china-is-reverse-engineering-americas.

Jake Hulina | Government Relations Manager, GeoComply

Jake is the Government Relations Manager for New Verticals at GeoComply. In this role, he works on policy issues related to technology, financial services, security, and other emerging use cases.